
AvatarBrush: Monocular Reconstruction of Gaussian Avatars with Intuitive Local Editing

Mengtian Li, Shengxiang Yao, Yichen Pan, Haiyao Xiao, Zhongmei Li, Zhifeng Xie, Keyu Chen

Splats

SMPL-X

Monocular

arXiv 2025

Abstract

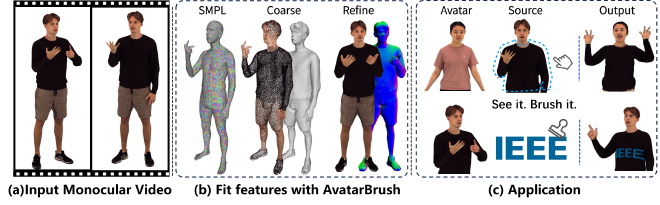

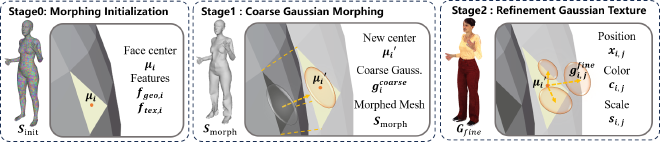

Efficiently reconstructing high-quality, intuitively editable human avatars is a pressing challenge in computer vision. Recent advances such as 3D Gaussian Splatting (3DGS) offer impressive reconstruction efficiency and rapid rendering, but intuitive local editing of these representations remains difficult. In this work, we propose AvatarBrush, a framework that reconstructs fully animatable and locally editable avatars from a single monocular video. We represent the avatar with a three-layer model and, inspired by mesh morphing techniques, design a framework that generates the Gaussian model from local information of the parametric body model. Whereas previous methods require scanned meshes or multi-view captures as input, our approach reduces costs and enhances editing capabilities such as body shape adjustment, local texture modification, and geometry transfer. Experiments on two datasets demonstrate superior quality and highlight the user-friendly, localized editing capabilities of our method.
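The abstract says the Gaussian model is generated from local information of the parametric body model (SMPL-X), but this page does not give the formulation. The following is only a minimal, hypothetical sketch of one common way to tie a Gaussian to a mesh: store it relative to a triangle's local frame so that reshaping the body carries it along. The names `BoundGaussian`, `triangle_frame`, and `gaussian_center`, the barycentric anchor, and the sqrt(area) offset scaling are all assumptions for illustration, not AvatarBrush's actual method.

```python
import math
from dataclasses import dataclass
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


def sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def add(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] + b[0], a[1] + b[1], a[2] + b[2])


def scale(a: Vec3, s: float) -> Vec3:
    return (a[0] * s, a[1] * s, a[2] * s)


def cross(a: Vec3, b: Vec3) -> Vec3:
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def norm(a: Vec3) -> float:
    return math.sqrt(a[0] ** 2 + a[1] ** 2 + a[2] ** 2)


def normalize(a: Vec3) -> Vec3:
    n = norm(a)
    return (a[0] / n, a[1] / n, a[2] / n)


@dataclass
class BoundGaussian:
    """Hypothetical Gaussian attached to one mesh triangle."""
    face: int      # index of the triangle it is bound to
    bary: Vec3     # barycentric anchor on the triangle (sums to 1)
    offset: Vec3   # offset in the (tangent, bitangent, normal) frame, in units of sqrt(area)


def triangle_frame(v0: Vec3, v1: Vec3, v2: Vec3):
    """Right-handed local frame and area of a triangle."""
    e1 = normalize(sub(v1, v0))            # tangent along the first edge
    n_raw = cross(sub(v1, v0), sub(v2, v0))
    area = 0.5 * norm(n_raw)
    n = normalize(n_raw)                   # face normal
    e2 = cross(n, e1)                      # bitangent completes the frame
    return e1, e2, n, area


def gaussian_center(g: BoundGaussian, vertices: List[Vec3],
                    faces: List[Tuple[int, int, int]]) -> Vec3:
    """World-space center of a bound Gaussian for the current mesh shape."""
    i0, i1, i2 = faces[g.face]
    v0, v1, v2 = vertices[i0], vertices[i1], vertices[i2]
    e1, e2, n, area = triangle_frame(v0, v1, v2)
    # Point on the surface given by the barycentric coordinates.
    anchor = add(add(scale(v0, g.bary[0]), scale(v1, g.bary[1])), scale(v2, g.bary[2]))
    # Local offset expressed in the triangle frame, scaled with triangle size.
    local = add(add(scale(e1, g.offset[0]), scale(e2, g.offset[1])), scale(n, g.offset[2]))
    return add(anchor, scale(local, math.sqrt(area)))
```

Because the offset is expressed in the triangle's own frame and scaled by its size, moving or stretching the triangles (for example after changing body shape parameters) moves the Gaussian consistently. A complete system would typically also rotate each Gaussian's covariance by the same frame; that part is omitted here.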

Approach

Results

Data

Comparisons

Performance
