MoCo-Flow

MoCo-Flow: Neural Motion Consensus Flow for Dynamic Humans in Stationary Monocular Cameras#

Xuelin Chen, Weiyu Li, Daniel Cohen-Or, Niloy J. Mitra, Baoquan Chen

NeRF

SMPL

Texture

Deformation

Monocular

Eurographics 2022

null

Abstract#

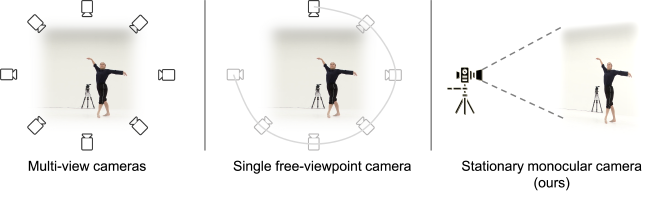

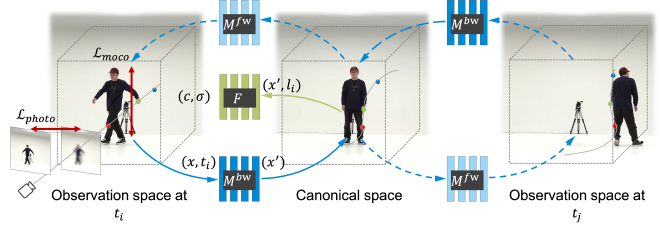

Synthesizing novel views of dynamic humans from stationary monocular cameras is a popular scenario. This is particularly attractive as it does not require static scenes, controlled environments, or specialized hardware. In contrast to techniques that exploit multi-view observations to constrain the modeling, given a single fixed viewpoint only, the problem of modeling the dynamic scene is significantly more under-constrained and ill-posed. In this paper, we introduce Neural Motion Consensus Flow (MoCo-Flow), a representation that models the dynamic scene using a 4D continuous time-variant function. The proposed representation is learned by an optimization which models a dynamic scene that minimizes the error of rendering all observation images. At the heart of our work lies a novel optimization formulation, which is constrained by a motion consensus regularization on the motion flow. We extensively evaluate MoCo-Flow on several datasets that contain human motions of varying complexity, and compare, both qualitatively and quantitatively, to several baseline methods and variants of our methods.

PaperApproach#

MoCo-Flow teaser.

MoCo-Flow overview.Results#

Data#

Comparisons#

Papers Published @ 2022 - This article is part of a series.

Part 28: This Article