ReLoo

ReLoo: Reconstructing Humans Dressed in Loose Garments from Monocular Video in the Wild

Chen Guo, Tianjian Jiang, Manuel Kaufmann, Chengwei Zheng, Julien Valentin, Jie Song, Otmar Hilliges

NeRF

SMPL

Deformation

Clothing

ECCV 2024


Abstract

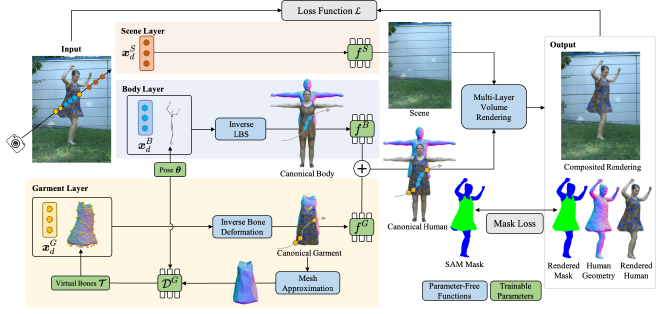

While previous years have seen great progress in the 3D reconstruction of humans from monocular videos, few of the state-of-the-art methods are able to handle loose garments that exhibit large non-rigid surface deformations during articulation. This limits the application of such methods to humans that are dressed in standard pants or T-shirts. We present ReLoo, a novel method that overcomes this limitation and reconstructs high-quality 3D models of humans dressed in loose garments from monocular in-the-wild videos. To tackle this problem, we first establish a layered neural human representation that decomposes clothed humans into a neural inner body and outer clothing. On top of the layered neural representation, we further introduce a non-hierarchical virtual bone deformation module for the clothing layer that can freely move, which allows the accurate recovery of non-rigidly deforming loose clothing. A global optimization is formulated that jointly optimizes the shape, appearance, and deformations of both the human body and clothing over the entire sequence via multi-layer differentiable volume rendering. To evaluate ReLoo, we record subjects with dynamically deforming garments in a multi-view capture studio. The evaluation of our method, on both existing datasets and our novel dataset, demonstrates its clear superiority over prior art on both indoor datasets and in-the-wild videos.
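To make the idea of a non-hierarchical deformation concrete, here is a minimal sketch in plain Python. It is not the paper's module: it is ordinary blend skinning in which each bone carries its own rigid transform and has no parent, so nothing is chained through a skeleton. The helper names (`rotation_z`, `apply_rigid`, `deform_point`), the two example bones, and the hand-set blend weights are all assumptions made for illustration; the abstract does not say how bones or weights are parameterized.

```python
import math


def rotation_z(angle):
    """Return a 3x3 rotation about the z-axis as row-major nested lists."""
    c, s = math.cos(angle), math.sin(angle)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]


def apply_rigid(rotation, translation, point):
    """Return rotation @ point + translation for a single 3D point."""
    return [
        sum(rotation[i][j] * point[j] for j in range(3)) + translation[i]
        for i in range(3)
    ]


def deform_point(point, bones, weights):
    """Blend independent per-bone rigid transforms for one point.

    bones:   list of (rotation, translation) pairs, one per virtual bone.
    weights: non-negative blend weights for this point, summing to 1.
    No bone has a parent, so each transform is applied directly
    (assumption: this is what "non-hierarchical" is taken to mean here).
    """
    out = [0.0, 0.0, 0.0]
    for (rotation, translation), w in zip(bones, weights):
        moved = apply_rigid(rotation, translation, point)
        out = [o + w * m for o, m in zip(out, moved)]
    return out


# Two hypothetical virtual bones attached to a garment.
bones = [
    (rotation_z(0.0), [0.0, 0.0, 0.0]),          # bone 0 leaves points in place
    (rotation_z(math.pi / 2), [0.0, 0.0, 1.0]),  # bone 1 rotates and lifts
]
point = [1.0, 0.0, 0.0]
fully_bone1 = deform_point(point, bones, [0.0, 1.0])
halfway = deform_point(point, bones, [0.5, 0.5])
```

Because the bones are independent, moving one bone only affects the points weighted toward it, which is a plausible reason such a scheme suits cloth that swings away from the body.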

Approach

ReLoo overview.

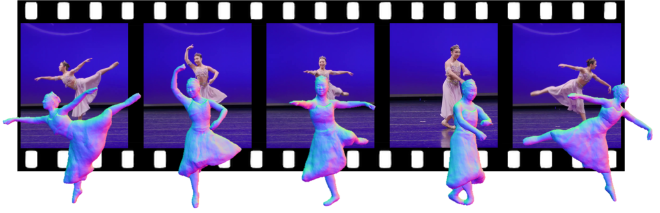
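The abstract mentions multi-layer differentiable volume rendering over a body layer and a clothing layer. The sketch below shows one common way to composite two density fields along a single ray: densities are summed per sample, colors are mixed by density, and the result is alpha-composited front to back. This is an assumption for illustration only; the page does not give ReLoo's actual rendering equations, and the function name `composite_two_layers` and the sample values are hypothetical.

```python
import math


def composite_two_layers(body, cloth, step):
    """Alpha-composite samples from two density fields along one ray.

    body, cloth: lists of (density, rgb) per sample, ordered near to far.
    step:        distance between consecutive samples.
    Returns (rgb, accumulated_opacity).
    """
    transmittance = 1.0
    rgb = [0.0, 0.0, 0.0]
    for (sigma_b, color_b), (sigma_c, color_c) in zip(body, cloth):
        sigma = sigma_b + sigma_c
        if sigma <= 0.0:
            continue  # empty space contributes nothing
        alpha = 1.0 - math.exp(-sigma * step)
        # Density-weighted mix of the two layers' colors at this sample.
        color = [
            (sigma_b * cb + sigma_c * cc) / sigma
            for cb, cc in zip(color_b, color_c)
        ]
        weight = transmittance * alpha
        rgb = [acc + weight * c for acc, c in zip(rgb, color)]
        transmittance *= 1.0 - alpha
    return rgb, 1.0 - transmittance


RED, BLUE = [1.0, 0.0, 0.0], [0.0, 0.0, 1.0]
# Hypothetical ray: red clothing is hit first, the blue body sits behind it.
body_samples = [(0.0, BLUE), (0.0, BLUE), (50.0, BLUE)]
cloth_samples = [(0.0, RED), (50.0, RED), (0.0, RED)]
pixel, opacity = composite_two_layers(body_samples, cloth_samples, step=0.1)
```

In this example the clothing occludes the body, so the pixel comes out almost entirely red. Every operation is smooth in the densities and colors, which is what lets a renderer of this kind pass gradients back to both layers at once.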

Results

Data

Comparisons
