STG-Avatar

STG-Avatar: Animatable Human Avatars via Spacetime Gaussian#

Guangan Jiang, Tianzi Zhang, Dong Li, Zhenjun Zhao, Haoang Li, Mingrui Li, Hongyu Wang

Splats

SMPL

Monocular

arXiv 2025

null

Abstract#

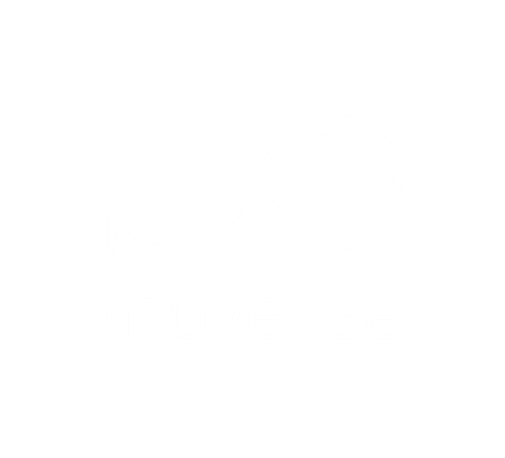

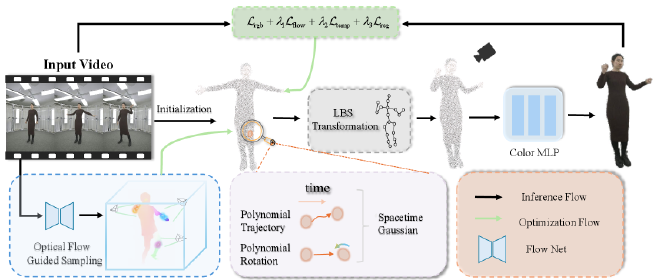

Realistic animatable human avatars from monocular videos are crucial for advancing human-robot interaction and enhancing immersive virtual experiences. While recent research on 3DGS-based human avatars has made progress, it still struggles with accurately representing detailed features of non-rigid objects (e.g., clothing deformations) and dynamic regions (e.g., rapidly moving limbs). To address these challenges, we present STG-Avatar, a 3DGS-based framework for highfidelity animatable human avatar reconstruction. Specifically, our framework introduces a rigid-nonrigid coupled deformation framework that synergistically integrates Spacetime Gaussians (STG) with linear blend skinning (LBS). In this hybrid design, LBS enables real-time skeletal control by driving global pose transformations, while STG complements it through spacetimeadaptive optimization of 3D Gaussians. Furthermore, we employ optical flow to identify high-dynamic regions and guide the adaptive densification of 3D Gaussians in these regions. Experimental results demonstrate that our method consistently outperforms state-of-the-art baselines in both reconstruction quality and operational efficiency, achieving superior quantitative metrics while retaining real-time rendering capabilities.

PaperApproach#

STG-Avatar overview.

STG-Avatar details.Results#

Data#

Comparisons#

Performance#

Papers Published @ 2025 - This article is part of a series.

Part 29: This Article